Loading Runtime

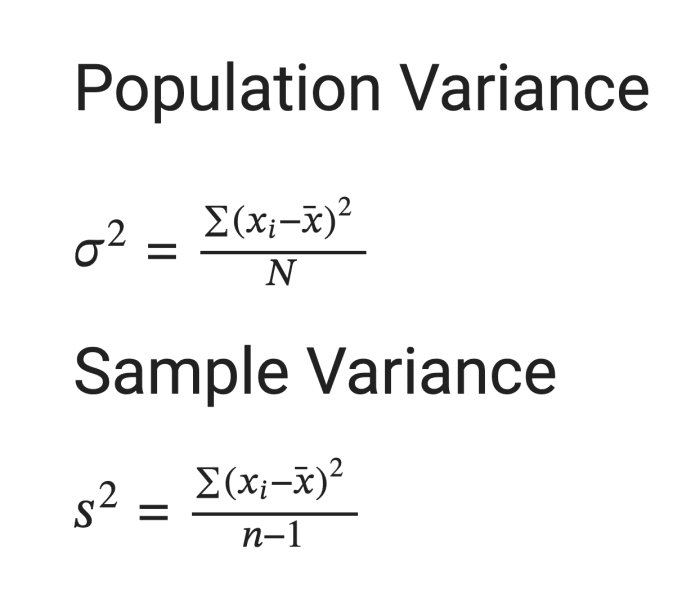

In statistics, variance is a measure of the spread or dispersion of a set of values. It quantifies how much individual data points in a dataset deviate from the mean (average) of the dataset. In other words, it provides a numerical measure of how scattered the data points are around the mean.

Key points about variance:

- Squared Differences: Variance involves squaring the differences between each data point and the mean. This squaring ensures that both positive and negative differences contribute to the overall measure of dispersion, and it amplifies the impact of larger deviations.

- Units: The variance is expressed in the square of the units of the original data. For example, if the data represents lengths in meters, the variance will be in square meters.

- Low Variance vs. High Variance: Low variance indicates that the data points tend to be close to the mean, suggesting a more concentrated or tightly clustered dataset. High variance suggests that the data points are more spread out from the mean, indicating greater variability or dispersion in the dataset.

- Standard Deviation: The square root of the variance is called the standard deviation (σ). It is often used as a more interpretable measure of dispersion because it is in the same units as the original data.

Variance is a fundamental concept in statistics and plays a crucial role in understanding the characteristics of a dataset. It is used in various statistical analyses and is a key component in the calculation of standard deviation and other measures of variability.